5 Power and Cooling Warning Signs You Can't Afford to Ignore

The complexities of closing on a home sale can send chills down the spines of veteran lenders and first-time homeowners alike — unless, that is, they’re working with a company like Mortgage Connect, which provides closing and settlement services to the nation’s top lenders to make the process as painless as possible.

But although Mortgage Connect helps its customers keep their cool, maintaining the temperature of its IT infrastructure can be a challenge. The company’s rapid growth has meant regular infusions of processing power and storage capacity — upgrades that strained the cooling system in its old data center.

So when Mortgage Connect moved to a new facility, IT managers didn’t deliberate long about whether to bring along the air conditioner from the old building.

“We knew we could do things better,” says Nick Goossen, vice president of IT. Moving to a new, modern data center is just one reason IT managers might decide it’s time to rethink a company’s power and cooling strategies. But experts say it’s not the only one. Here are five signs the time has come to make a change:

Warning Sign #1: Your Server Racks Double as a Sauna

The problem with Mortgage Connect’s old cooling system wasn’t that it didn’t have the capacity to cool the company’s servers.

In fact, the overall room temperature routinely stayed within the proper range. Problems surfaced when the IT staff took spot readings of temperatures where they mattered most — behind the banks of servers, where they discovered hot spots that threatened to overheat valuable resources.

“Our cooling was inefficient because we were not able to direct cooler air where it was most important,” says John Benzinger, executive vice president and CIO. “And we were unable to effectively remove the heat discharge from the back of the servers, which created hot spots.”

He and Goossen solved the problem in Mortgage Connect’s new facility with an efficient in-row cooling system that blows cold air directly into the containment aisle that holds heat from the servers.

The result: no more hot zones, and consistently comfortable temperatures throughout the facility.

Warning Sign #2: Small Problems Turn into Major Crises

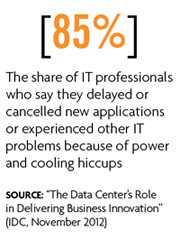

Regional catastrophes, such as Superstorm Sandy, aren’t the only threats to continuity of operations.

Everything from a brownout to a server fan that stops working can wreak havoc. When companies avoid investment in redundant equipment, they become vulnerable to one weak link bringing down a large portion of the IT operation.

The answer is what insiders call N+1 redundancy: if N units are needed to carry the load, at least one more stands by, so every power and cooling component is supported by a backup resource. For example, in addition to a battery backup to keep servers running temporarily if the company loses power, Mortgage Connect also has a generator.

“We no longer had a single point of failure with our power source or battery backup,” Goossen says. The IT department applies the same redundancy strategy to smaller components, such as the fans that blow cool air to the hot aisle behind the server racks.
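The N+1 rule is easy to check with a little arithmetic. The sketch below is illustrative only: the function names and the load and capacity figures are hypothetical and do not come from Mortgage Connect's facility.

```python
import math


def units_required(load_kw: float, unit_capacity_kw: float) -> int:
    """Return N, the minimum number of units needed to carry the load."""
    return math.ceil(load_kw / unit_capacity_kw)


def is_n_plus_1(load_kw: float, unit_capacity_kw: float, installed_units: int) -> bool:
    """True if at least one spare unit exists beyond the N that are required."""
    return installed_units >= units_required(load_kw, unit_capacity_kw) + 1


# Hypothetical example: a 50 kW heat load served by 20 kW cooling units.
# Three units carry the load (N = 3), so N+1 calls for four installed.
print(units_required(50, 20))
print(is_n_plus_1(50, 20, installed_units=3))
print(is_n_plus_1(50, 20, installed_units=4))
```

The same check applies to any component class, whether UPS modules, cooling units or fans, as long as the load and the per-unit capacity are expressed in the same units.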

Warning Sign #3: Something’s Growing on the Data Center’s Walls

Most IT managers will occasionally check the ambient temperature of the data center and maybe the micro-climates close to the server racks. But doing so offers only a rudimentary measure of atmospheric health.

A better approach is to record not only temperatures, but also humidity levels and other readings at various times of the day, says David Hutchison, senior partner and founder of Excipio, a consulting firm that specializes in IT power and cooling strategies.

“One day you may find that you have 95 percent relative humidity, but unless you track the readings over time, you won’t know whether that’s good or bad until you start to see mold on the walls,” he says. More likely, he adds, the problem won’t become apparent until a server stops working reliably.

“Whether you monitor conditions remotely or you have someone walk through the data center once an hour, somebody needs to be making a log of temperature, humidity and the status of the uninterruptible power supplies,” he says. “Once you have that trend data, you can start to spot anomalies.”
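One way to turn such a log into trend data is to compare each new reading with the readings that came just before it. The sketch below is a minimal illustration, not a method Hutchison describes: the `Reading` fields, the five-reading window, the three-standard-deviation threshold and the sample numbers are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class Reading:
    hour: int            # position in the log, e.g. hourly walk-through number
    temp_c: float        # temperature (unit is an assumption)
    humidity_pct: float  # relative humidity, percent


def find_anomalies(readings, field, window=5, threshold=3.0, min_sigma=0.5):
    """Flag readings that deviate sharply from the preceding `window` readings.

    A reading is flagged when it differs from the rolling mean by more than
    `threshold` standard deviations. `min_sigma` keeps a perfectly flat
    baseline from flagging trivial changes.
    """
    flagged = []
    for i in range(window, len(readings)):
        baseline = [getattr(r, field) for r in readings[i - window:i]]
        mu = mean(baseline)
        sigma = max(stdev(baseline), min_sigma)
        if abs(getattr(readings[i], field) - mu) > threshold * sigma:
            flagged.append(readings[i])
    return flagged


# Hypothetical hourly log: humidity hovers in the mid-40s, then spikes.
humidity = [45, 46, 44, 45, 47, 46, 95, 46]
log = [Reading(hour=h, temp_c=22.0, humidity_pct=v) for h, v in enumerate(humidity)]

for r in find_anomalies(log, "humidity_pct"):
    print(f"hour {r.hour}: humidity {r.humidity_pct}% is out of trend")
```

Because each reading is judged against its own recent history, a single 95 percent humidity value stands out only if the room normally sits far below that, which is the point of keeping the log in the first place.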

Warning Sign #4: You Start Ignoring the Warning Signs

IT managers acknowledge that the proper care and maintenance of an entire power and cooling environment takes time and effort — sometimes more than a busy IT shop can muster. Some savvy techies have the answer: They hire a managed services provider.

Companies can pay a monthly service fee to a provider to monitor the health of their power and cooling units from a central location. If an individual battery module on a UPS shows signs of trouble, for example, a technician can troubleshoot the problem.

Additional service providers can be contracted to monitor the prevailing status of the data center air to make sure everything conforms to the service-level agreement. When the power and cooling systems are running properly, these services also handle the routine care and maintenance of the equipment.

Warning Sign #5: Your Data Center Is Always Bursting at the Seams

When Shearer’s Foods, a manufacturer of kettle-cooked potato chips and other snacks, moved its corporate headquarters, it made sure its new data center had room to grow. The modern facility uses the latest technologies for hot-aisle containment and in-row cooling. And when it installed roof-mounted air condensers that generate refrigerated gas to cool servers, the company included extra wiring and other provisions to make it easier to add capacity as demand grows.

Shearer’s Foods also built two rows to house server-cooling units, although only one row is currently being used. This gives the IT staff much more flexibility than in the past, when all it had to work with was a single 20-ton air conditioner. “We’re now in a position where we can scale up easily based on our needs,” says IT Director Greg Sprutte.